When DeepSeek hit the scene back in January, I started thinking about ways I could run large language models locally on my own hardware. Not long after, I found out about Ollama, an open-source framework that does exactly that.

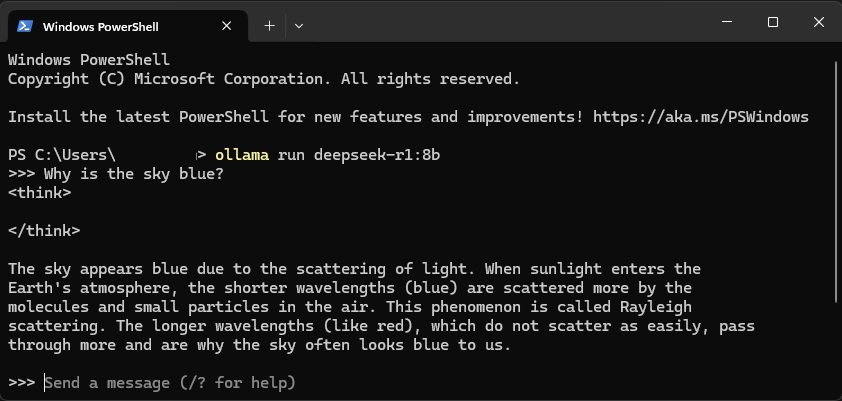

For this project, I repurposed a Zotac Magnus One mini PC that I bought a while ago. I had been using it to run weekly backups for my fileshare, but it came with an NVIDIA RTX 3070 and enough storage to make it a good fit for running a handful of AI models. I installed Ollama on Windows 11 and ran it from PowerShell. From there, I pulled the 8B-parameter DeepSeek model and began running queries.
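
Besides the interactive prompt in PowerShell, Ollama also serves a local REST API, so the same queries can be sent from a script. The following is a minimal sketch, assuming Ollama is listening on its default port (11434) and that the model was pulled under the tag `deepseek-r1:8b`. Both are assumptions, and the helper names `build_payload` and `ask` are made up for illustration.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(prompt, model="deepseek-r1:8b"):
    """Build the JSON body for Ollama's /api/generate endpoint.

    The model tag is an assumption; use whatever `ollama list` shows.
    "stream": False asks for one complete JSON reply instead of chunks.
    """
    return {"model": model, "prompt": prompt, "stream": False}


def ask(prompt, model="deepseek-r1:8b", url=OLLAMA_URL):
    """Send a prompt to the local Ollama server and return the reply text."""
    data = json.dumps(build_payload(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]
```

With Ollama running, `print(ask("Why is the sky blue?"))` should print the model's answer. Nothing beyond the Python standard library is needed.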

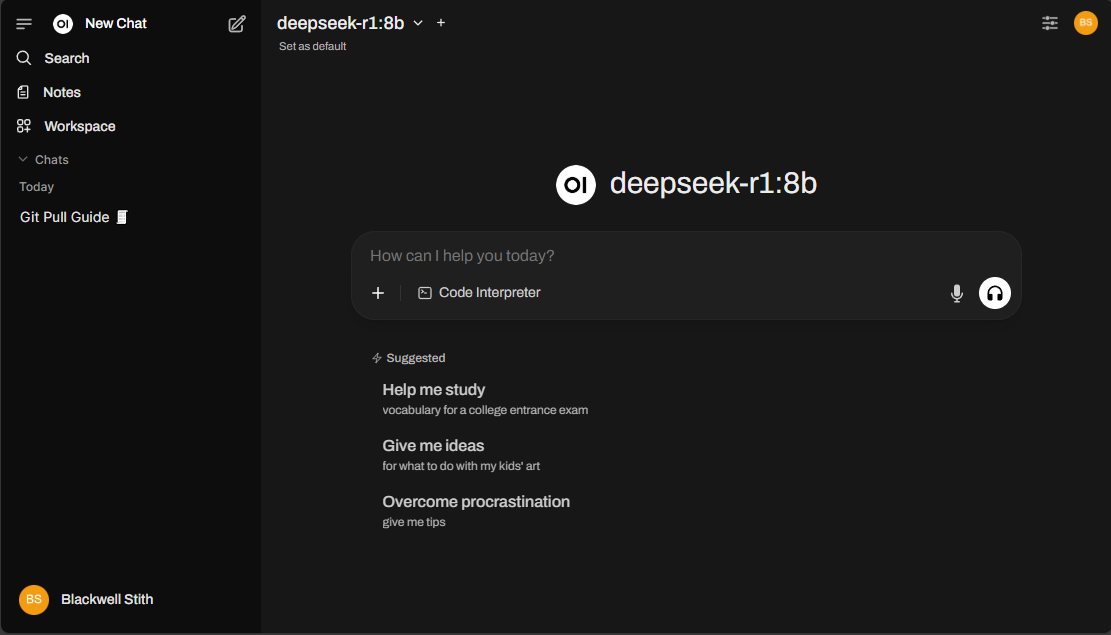

Using PowerShell was one thing, but what if I could set up a web portal and use Ollama from multiple devices on my home network? Enter Open WebUI, a platform that, as its name suggests, provides a web interface for offline models running on local hardware. The experience is similar to ChatGPT, Copilot, and Gemini, and it can be deployed as a Docker container.

I installed Docker Desktop on the same computer running Ollama and pulled the Open WebUI container image. After tweaking a few settings so the container restarts automatically after a system reboot, I navigated to the instance from my browser and set up a local account. From there, I was able to start using it without any additional setup.
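
For reference, the container setup described above can be sketched as a single `docker run` invocation. This is an illustration based on the command in the Open WebUI README, not a record of the exact settings used here. The host port 3000 and the `always` restart policy are assumptions, and `open_webui_command` is a hypothetical helper name.

```python
import shlex


def open_webui_command(host_port=3000, restart="always"):
    """Return the argument list for starting Open WebUI in Docker.

    Assumptions: host port 3000 and restart policy "always"; adjust to taste.
    """
    return [
        "docker", "run", "-d",
        # Map a host port to the port Open WebUI listens on in the container.
        "-p", f"{host_port}:8080",
        # Let the container reach Ollama running on the host machine.
        "--add-host=host.docker.internal:host-gateway",
        # Keep accounts and chat history in a named volume.
        "-v", "open-webui:/app/backend/data",
        "--name", "open-webui",
        # Restart policy: bring the container back after a reboot.
        "--restart", restart,
        "ghcr.io/open-webui/open-webui:main",
    ]


# Print the command so it can be reviewed before running it in a terminal.
print(shlex.join(open_webui_command()))
```

The `--restart` flag is what brings the container back after a reboot, provided Docker Desktop itself is set to start on login. Other devices on the network would then reach the interface at the host's IP address on the chosen port.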

I enjoy the convenience of being able to switch between models to compare their outputs, and the interface keeps a history of my conversations. I can also set system prompts for specific models, which helps refine their responses. Multiple accounts can be created, so you can let other members of your household use Open WebUI while managing their access to downloaded models and keeping their prompts separate.

Since I set this up, the Open WebUI project has released a Docker image bundled with Ollama, which eliminates the need to configure the Windows Subsystem for Linux (a prerequisite until now). There will probably come a time when I start from scratch and replace the hard drive in my computer with a larger one to fit larger models, and that would be a perfect time to reinstall Ollama and Open WebUI in the same container.
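
As a rough sketch of what that future reinstall might look like, the bundled image is published under the `:ollama` tag, and the Open WebUI README pairs it with a GPU flag and a second volume for downloaded models. The port and restart policy below are assumptions, `bundled_command` is a hypothetical helper name, and GPU passthrough depends on the Docker setup on the machine.

```python
import shlex


def bundled_command(host_port=3000, use_gpu=True):
    """Return the argument list for the Open WebUI image bundled with Ollama.

    Assumptions: host port 3000, and a GPU that Docker can pass through.
    """
    args = ["docker", "run", "-d", "-p", f"{host_port}:8080"]
    if use_gpu:
        # Expose the host GPU(s) to the container.
        args.append("--gpus=all")
    args += [
        # Downloaded models live in their own volume...
        "-v", "ollama:/root/.ollama",
        # ...separate from Open WebUI's accounts and chat history.
        "-v", "open-webui:/app/backend/data",
        "--name", "open-webui",
        "--restart", "always",
        "ghcr.io/open-webui/open-webui:ollama",
    ]
    return args


# Print the command for review instead of executing it directly.
print(shlex.join(bundled_command()))
```

Keeping the models in a separate named volume means the container can be replaced or upgraded without downloading them again.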